Quick Look

Grade Level: 12 (11-12)

Time Required: 45 minutes

Lesson Dependency:

Subject Areas: Computer Science

Summary

Students are introduced to the Robotics Peripheral Vision Grand Challenge question. They write journal responses to the question and brainstorm what information they need in order to answer it. They share their ideas with the class and record them, then share their ideas with each other and brainstorm any additional ideas. Next, students establish a baseline for the average peripheral vision of humans and compare that range to the range of two different focal lengths in a camera. Through the associated activity, students see the differences between human and computer vision.

Engineering Connection

Robotics has a very strong connection to engineering. Making a robot involves two main phases. The first phase is creating the mechanical portion of the robot. This involves both design and implementation, which are essential steps in every engineering field. The second phase is designing the human interfaces used to interact with the robot. The key to a robot's usefulness is its software, so this second phase is completed through programming by computer science and software engineers.

Computer vision is applicable in many areas. Computer science engineers collaborate with mechanical, electrical and other types of engineers to take digital image data and develop many different technologies, for example, x-ray images used in the medical field. A robot that "sees" could be used, for example, in unmanned vehicles or as a way to conduct visual surveillance for weather forecasting, dangerous exploration or national security. A robot meant to interact with humans would also benefit from being able to "see."

Learning Objectives

After this lesson, students should be able to:

- Explain briefly how the human eye sees.

- Explain the Grand Challenge problem.

- List what information might be needed to answer the challenge.

- Group together similar areas of knowledge needed for the challenge.

- Visualize a general idea of what the solution program should do.

- State the average range of peripheral vision for a human being.

- Explain how focal lengths of lenses affect field of view.

- Find the ratio between focal lengths and image size.

- Explain how engineers apply this information to solve problems.

Educational Standards

Each Teach Engineering lesson or activity is correlated to one or more K-12 science, technology, engineering or math (STEM) educational standards.

All 100,000+ K-12 STEM standards covered in Teach Engineering are collected, maintained and packaged by the Achievement Standards Network (ASN), a project of D2L (www.achievementstandards.org).

In the ASN, standards are hierarchically structured: first by source; e.g., by state; within source by type; e.g., science or mathematics; within type by subtype, then by grade, etc.

International Technology and Engineering Educators Association - Technology

- Assess how similarities and differences among scientific, mathematical, engineering, and technological knowledge and skills contributed to the design of a product or system. (Grades 9-12)

State Standards

Tennessee - Science

- Identify the structures and functions of the body's sensory organs. (Grades 9-12)

- Explore the optics of lenses. (Grades 9-12)

Introduction/Motivation

(Present to the class the Grand Challenge through the following hypothetical storyline.)

"The Wall-e robotics firm thinks it has a unique and novel solution for getting a broader spectrum of usable data. Instead of using a single camera, they have mounted two cameras at different focal lengths on top of a robot. The first camera provides an up-close and detailed image (however, this image lacks surrounding data) and the second camera provides a broader view with less detail. However, they need this data to be usable by humans. Right now a human must look at two separate pictures. Could you somehow combine those images to simulate how a human's vision would focus in and out of the two focal lengths? How would you accomplish this task?"

Have you ever wondered how a human sees? Have you wondered about how human vision compares to how a robot gathers visual data? Once you see the connection, you will be able to develop ideas on how to simulate human vision in a computer interface.

(This lesson covers the Grand Challenge, Generate Ideas, and Multiple Perspectives phases of the legacy cycle. Its aim is to help students engage deeply with the challenge question and become motivated to learn the information needed to answer it. In the Generate Ideas section, students identify prior knowledge, so they feel that they have a starting place for the problem. Students get to hear everyone's ideas, so they know what their classmates know. This is also a time for teachers to identify misconceptions. During the Multiple Perspectives phase, students obtain more clues to the challenge question and see why this information is useful.)

(After introducing the Grand Challenge, move on to the Generate Ideas part of the legacy cycle. Ask students to work independently to record in their journals their personal thoughts and ideas about the problem. Use one or more of the following questions to help students get started.)

- What do you think a robot really sees?

- How does human vision differ from a camera lens?

- What do you need to know more about?

- What do you already know that is relevant?

(Begin with the students working alone to record their own thoughts and ideas on the challenge. Circulate around the room and assist any students who are drawing a blank. After a period of time, record students' thoughts on the classroom board, overhead projector or large notepad. Elicit ideas from everyone and record all of them. If the class is very large, have students pair up and share ideas, then get at least one idea from each team.)

(For the Multiple Perspectives step, have students share their journal entries with each other. This has the potential to lead students in new directions.)

(The goal of all this brainstorming is to break the Grand Challenge down into three knowledge areas: how the human eye sees and how a lens sees, how images are composed on a computer, and how to manipulate computer images. If students do not think of these topics by themselves, ask leading questions to help them. Consider proposing the following questions throughout these first steps of the legacy cycle to direct and categorize students' thoughts.)

- How does a human being see?

- How does human vision compare to how a robot gathers visual data?

- How can we begin to develop ideas on how to simulate human vision in a computer interface?

Lesson Background and Concepts for Teachers

Information to include in the lecture:

How the Eye Works

1. First, light rays enter the eye through the cornea.

- About 75% of the eye's bending of light takes place as light enters the cornea, because of the large difference in optical density between air and the cornea.

- The cornea is about 0.5 mm thick at its center.

2. Light rays pass through the lens.

- The lens is transparent and can change in shape and curvature.

- The lens transforms in order to focus on both near and distant objects.

  - Rounder and thicker lens for near objects.

  - Flatter lens for distant objects.

- Light bends twice, both as the light enters and as it exits the lens.

3. Next, light hits the retina.

- How sharply the light is focused when it hits the retina depends on the shape of the lens.

- Light-sensitive cells in the retina send signals through a range of cells and nerves to the brain.

4. Distant Vision

- Any light from beyond six feet approaches the eyes as nearly parallel rays.

- These rays can be focused by the cornea and at-rest lens.

5. Close Vision

- Light from nearer than six feet diverges as it approaches the eye.

- To accommodate this diverging light:

  - The lens changes shape.

  - The pupils constrict.

  - The eyeballs converge (both turn towards the source of focus).
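To make the six-foot threshold concrete, the following is a minimal sketch (not part of the original lesson) that estimates how much the rays from a single point spread apart by the time they enter the eye. The 4 mm pupil diameter and the function name `ray_spread_degrees` are assumptions chosen for illustration.

```python
import math

FEET_TO_METERS = 0.3048
PUPIL_DIAMETER_M = 0.004  # assumed 4 mm pupil, for illustration only


def ray_spread_degrees(distance_m, pupil_diameter_m=PUPIL_DIAMETER_M):
    """Angle between the two outermost rays from a point source that enter the pupil."""
    return math.degrees(2 * math.atan((pupil_diameter_m / 2) / distance_m))


# Compare a near object, the six-foot threshold, and a distant object.
for feet in (1, 6, 60):
    spread = ray_spread_degrees(feet * FEET_TO_METERS)
    print(f"{feet:>2} ft: rays spread by about {spread:.3f} degrees")
```

Under these assumptions, the rays from a point six feet away spread by only about an eighth of a degree, which is why they can be treated as nearly parallel, while rays from a point one foot away diverge several times more.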

How a (Single Lens Reflex) Camera Works

1. Light passes through a single lens onto light-sensitive film, which is chemically processed to produce a photograph.

2. Focusing on the film can be achieved at different distances by:

- Moving the lens closer to or further from the film.

  - Closer to film for distant focus.

  - Further from film for close focus.

- Changing the size (focal length) of the lens.

  - Larger focal length for distant focus.

  - Smaller focal length for close focus.
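The relationship between object distance and lens-to-film distance can be illustrated with the standard thin-lens equation, 1/f = 1/d_object + 1/d_image. The sketch below is an idealized single-lens model, not a description of any particular camera; the 50 mm focal length and the function name `image_distance_mm` are assumptions made for the example.

```python
def image_distance_mm(focal_length_mm, object_distance_mm):
    """Lens-to-film distance that brings an object into focus (thin-lens model)."""
    if object_distance_mm <= focal_length_mm:
        raise ValueError("object must be farther from the lens than one focal length")
    # Rearranged from 1/f = 1/d_object + 1/d_image.
    return focal_length_mm * object_distance_mm / (object_distance_mm - focal_length_mm)


FOCAL_LENGTH_MM = 50.0  # hypothetical lens, for illustration only

for object_distance_mm in (500.0, 2000.0, 10000.0):
    d_image = image_distance_mm(FOCAL_LENGTH_MM, object_distance_mm)
    print(f"object at {object_distance_mm / 1000:.1f} m -> lens {d_image:.2f} mm from film")
```

In this model, a distant object focuses with the lens only slightly more than one focal length from the film, while a close object requires the lens to sit further from the film, matching the two cases listed above.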

Real-World Engineering Connections

1. It is important for biomedical engineers to understand how the human eye and optical lenses work in order to be able to design technology to improve vision or simulate human vision.

2. Similarly, computer science engineers need to know about programming because computer science engineering involves both hardware and software development, and programming is essential to building or modifying any software.

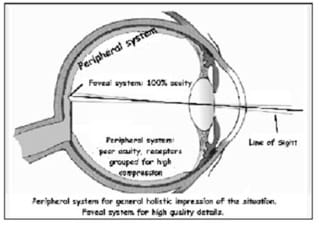

Peripheral Vision

1. Definitions:

- Peripheral vision is the outer part of the field of vision.

- Foveal vision is the central vision.

2. Peripheral vision happens on the outskirts of a person's vision. It can be nearer to or further from the center of vision and can be placed in the following subgroups:

- "far peripheral"

- "mid-peripheral"

- "near peripheral" (also called "paracentral")

3. Peripheral vision can be improved by practice. Jugglers do this in order to keep track of balls that are further away from their central vision.

4. Explain how to find the ratio between focal lengths and image size.
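One way to present the ratio between focal lengths and image size is with a short calculation. The sketch below is a simplified model, assuming distant objects (so image size scales in proportion to focal length) and a 36 mm wide film frame. The 28 mm and 100 mm focal lengths and both function names are hypothetical choices for illustration, not values from the lesson.

```python
import math

FRAME_WIDTH_MM = 36.0  # width of a standard 35 mm film frame


def field_of_view_degrees(focal_length_mm, frame_width_mm=FRAME_WIDTH_MM):
    """Horizontal angle of view for a lens focused on a distant scene."""
    return math.degrees(2 * math.atan(frame_width_mm / (2 * focal_length_mm)))


def image_size_ratio(focal_length_a_mm, focal_length_b_mm):
    """How many times larger a distant object appears with lens B than with lens A."""
    return focal_length_b_mm / focal_length_a_mm


SHORT_MM, LONG_MM = 28.0, 100.0  # hypothetical focal lengths

for focal_length in (SHORT_MM, LONG_MM):
    print(f"{focal_length:.0f} mm lens: {field_of_view_degrees(focal_length):.1f} degree field of view")

print(f"Image size ratio (long / short): {image_size_ratio(SHORT_MM, LONG_MM):.2f}")
```

Under these assumptions, the longer lens shows the same object about 3.6 times larger but covers a much narrower field of view, which is the trade-off students observe when comparing the two camera lenses with their own peripheral vision.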

After the lecture, conduct the Peripheral Vision Lab activity, in which students test their vision and compare their peripheral vision to normal human peripheral vision. They then compare their own vision to the view through a camera lens.

Associated Activities

- Peripheral Vision Lab - Students test their vision and compare their peripheral vision to normal human peripheral vision. They then compare their own vision to the view through a camera lens.

Vocabulary/Definitions

cornea: A coating on the eyeball; transparent to allow light through the pupil and focus it into the eye.

focal length: The distance between the point to which light rays are focused and the surface of the lens or mirror.

foveal vision: Central vision.

lens (camera): A piece of transparent material used to focus rays of light.

lens (eye): A transparent layer of the eye that focuses light on the retina.

peripheral vision: The outer part of the field of vision.

retina: A sensory membrane lining the back of the eye that receives an image and sends signals to the brain.

Assessment

Journaling: Review the journal entries that students created after being introduced to the Grand Challenge.

Copyright

© 2010 by Regents of the University of Colorado; original © 2010 Vanderbilt University

Contributors

Mark Gonyea; Anna Goncharova; Rachelle Klinger

Supporting Program

VU Bioengineering RET Program, School of Engineering, Vanderbilt University

Acknowledgements

The contents of this digital library curriculum were developed under National Science Foundation RET grant nos. 0338092 and 0742871. However, these contents do not necessarily represent the policies of the NSF, and you should not assume endorsement by the federal government.

Last modified: June 29, 2017
